In 2019, a Midwest hospital system deployed a sepsis prediction algorithm across its ICUs. Validated on hundreds of thousands of patient records. Strong sensitivity scores. Clinicians briefed that it would save lives — and there was genuine reason to believe this.

Two years later, a research team reviewed the deployment. The model was generating alerts. The alerts were being acknowledged. And then — in a significant fraction of cases — ignored. Not because the staff were negligent. Because the alert rate was high enough that clinicians had learned, through experience, that most alerts didn't correspond to patients who were actually deteriorating.

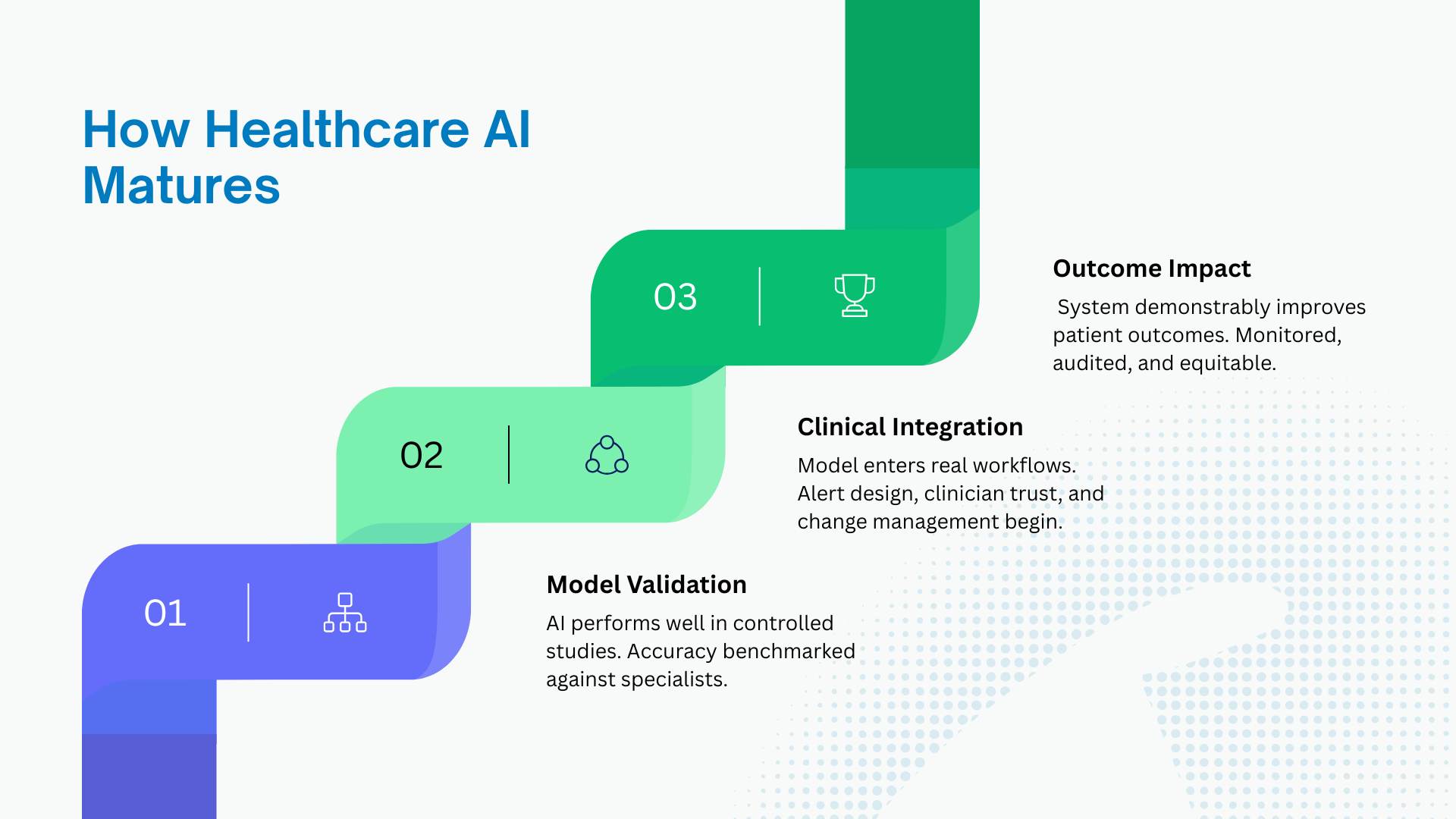

The model worked. The deployment failed. That gap — between a validated model and a deployed system that reliably improves patient outcomes — is the defining challenge of healthcare AI right now. And understanding it, in precise terms, is where the most consequential work in the field is being done.

The State of the Field: Two Truths at Once

The technical progress is real — and understated

In well-defined diagnostic tasks, AI has not just approached specialist-level accuracy. In several domains it has exceeded it, under rigorous conditions, in peer-reviewed studies with independent replication.

Google's work on diabetic retinopathy detection, published in JAMA in 2016 and subsequently refined, showed performance exceeding that of ophthalmologists on grading fundus photographs. A 2023 study in The Lancet Digital Health demonstrated that AI-assisted colonoscopy detection reduced the miss rate for adenomas by nearly 50% compared to standard colonoscopy. The FDA has cleared over 700 AI/ML-enabled medical devices as of 2024, a number that has roughly doubled in three years.

For the approximately four billion people on earth without reliable access to specialist care, these are not incremental improvements. A validated retinopathy screening tool that can run on a smartphone camera and a cloud API can reach a patient in rural sub-Saharan Africa or rural India in a way that a retinal specialist physically cannot. The equity implications, approached honestly, are staggering in their positive potential.

The deployment reality is far harder than the headlines suggest

The path from a validated model to a system that reliably improves outcomes is longer, more failure-prone, and more organizationally demanding than the technology's advocates typically acknowledge. The clinical environment is not a controlled research setting. Patients present with comorbidities the training data did not adequately represent. Workflows absorb new tools in ways their designers did not anticipate. The humans in the loop respond to algorithmic outputs with cognitive patterns — automation bias, alert fatigue, diffusion of responsibility — that are well-documented and almost universally underestimated in deployment planning.
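The alert-fatigue dynamic follows from simple arithmetic. A minimal sketch, using illustrative inputs that are assumptions rather than figures from any deployment discussed here, shows how a model with strong sensitivity and specificity can still produce mostly false alerts when the condition is uncommon:

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Fraction of alerts that are true positives (Bayes' rule)."""
    true_alerts = sensitivity * prevalence
    false_alerts = (1.0 - specificity) * (1.0 - prevalence)
    return true_alerts / (true_alerts + false_alerts)


# Illustrative values only (assumed, not taken from a real deployment).
ppv = positive_predictive_value(sensitivity=0.85, specificity=0.90, prevalence=0.05)
false_alerts_per_true_alert = (1.0 - ppv) / ppv
print(f"PPV: {ppv:.2f}; false alerts per true alert: {false_alerts_per_true_alert:.1f}")
```

Under these assumed inputs, roughly 31% of alerts correspond to a true case, so a clinician sees about two false alerts for every real one. The model's headline metrics have not changed; the experience at the bedside has.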

A 2022 study in NEJM Catalyst examining AI deployments across 40 US health systems found that fewer than 15% of deployed models had undergone prospective clinical evaluation before going live. Most had been validated retrospectively on historical data and then deployed into operational workflows with minimal structured monitoring. The performance degradation that occurs when a model trained on historical data meets a shifting patient population — a phenomenon researchers call dataset shift — is real, measurable, and largely unmonitored in the majority of production healthcare AI deployments today.
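One simple way to make dataset shift visible is to compare the distribution of an input feature at training time against what the model sees in production. The sketch below uses the population stability index (PSI), a general-purpose drift statistic. It is offered as one possible check, not as the method used by any system named here, and the feature values and bin edges are hypothetical.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bin: (-inf, e0), [e0, e1), ..., [e_last, +inf)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(1 for e in edges if v >= e)
        counts[idx] += 1
    total = len(values)
    return [c / total for c in counts]


def population_stability_index(baseline: Sequence[float], current: Sequence[float],
                               edges: Sequence[float], eps: float = 1e-6) -> float:
    """PSI = sum over bins of (cur - base) * ln(cur / base); 0 means identical bin shares."""
    base = bin_fractions(baseline, edges)
    cur = bin_fractions(current, edges)
    psi = 0.0
    for b, c in zip(base, cur):
        b, c = max(b, eps), max(c, eps)  # avoid log(0) when a bin is empty
        psi += (c - b) * math.log(c / b)
    return psi


# Hypothetical patient ages: training cohort versus a recent production window.
training_ages = [45, 52, 60, 63, 67, 71, 74, 80]
recent_ages = [62, 68, 72, 75, 79, 83, 86, 90]
psi = population_stability_index(training_ages, recent_ages, edges=[50, 65, 80])
print(f"PSI for age: {psi:.3f}")
```

A PSI of zero means the binned distributions match; larger values mean the production population has moved away from the training population. What value should trigger a review is a local decision that needs to be agreed before go-live.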

What the organizations getting it right actually do differently

Our research found a consistent pattern among health systems generating measurable outcome improvements from AI. They share almost nothing in terms of model sophistication or vendor choice. They share almost everything in terms of implementation philosophy.

They treat deployment as a clinical change management problem, not a technical integration problem. They measure success by patient outcomes, not model performance metrics. They involve clinical staff in system design from the beginning — not as end users to be trained but as the primary architects of how the tool fits into care delivery. And they build monitoring pipelines that track real-world performance against prospectively defined clinical benchmarks, with clear thresholds for intervention if performance degrades.
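The monitoring idea can be sketched in a few lines. The example below is a simplified illustration, not a description of any health system's pipeline: the `Benchmark` class, the metric choices, and the threshold values are all hypothetical, and a real pipeline would also handle outcome adjudication delays and sample-size uncertainty.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class Benchmark:
    """Prospectively agreed performance floors (values used below are hypothetical)."""
    min_sensitivity: float
    min_ppv: float


def window_metrics(records: Sequence[Tuple[bool, bool]]) -> Tuple[float, float]:
    """records are (alert_fired, event_occurred) pairs; returns (sensitivity, ppv)."""
    tp = fp = fn = 0
    for alert, event in records:
        if alert and event:
            tp += 1
        elif alert:
            fp += 1
        elif event:
            fn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    ppv = tp / (tp + fp) if (tp + fp) else float("nan")
    return sensitivity, ppv


def breaches(records: Sequence[Tuple[bool, bool]], benchmark: Benchmark) -> List[str]:
    """Names of metrics below their floor in this window (undefined metrics are not flagged)."""
    sensitivity, ppv = window_metrics(records)
    flagged = []
    if sensitivity < benchmark.min_sensitivity:
        flagged.append("sensitivity")
    if ppv < benchmark.min_ppv:
        flagged.append("ppv")
    return flagged


# Hypothetical month of adjudicated outcomes for one unit.
month = ([(True, True)] * 3 + [(True, False)] * 7
         + [(False, True)] * 1 + [(False, False)] * 89)
print(breaches(month, Benchmark(min_sensitivity=0.80, min_ppv=0.25)))
```

The design point is that the floors are fixed before deployment, so a breach triggers a predefined review rather than a debate about whether the number is acceptable.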

This sounds obvious. It is absent in the majority of deployments.

The Tools and Technologies Shaping Production Healthcare AI

Clinical NLP and large language models

The near-term impact of LLMs in healthcare is concentrated in one specific, high-value area: the documentation burden. US physicians spend, on average, nearly two hours on documentation for every hour of direct patient care. The EHR was designed as a billing and compliance system; it has been reluctantly and incompletely adapted for clinical use. The human cost — in burnout, in time taken from patients, in the cognitive load of translating clinical judgment into billing codes — is enormous.

Ambient AI tools are changing this in real time. Nuance DAX Copilot (Microsoft), Abridge, and Suki convert clinical conversations into structured notes in the background of a patient encounter. Early deployments at health systems including UCSF and Sutter Health have shown documentation time reductions of 50–70% in controlled studies.

At the research frontier, Med-PaLM 2 and Med-Gemini (Google) have demonstrated performance well above passing thresholds on US Medical Licensing Examination-style questions. BioMedLM (Stanford) and GatorTron (University of Florida) are domain-specific models trained on biomedical and clinical text at scale. The capability is no longer the question. The deployment questions around hallucination risk, liability, consent, and clinical workflow integration are where the serious work is being done.

Medical imaging AI

This is the most mature corner of healthcare AI, with the longest history of FDA clearances and the strongest evidence base. The underlying architecture has evolved from early CNNs through DenseNets and U-Net variants for segmentation, and is now increasingly dominated by Vision Transformers (ViTs) and hybrid CNN-transformer architectures.

Production tools with significant clinical deployment include Viz.ai (stroke detection and cardiac triage, deployed in over 1,200 US hospitals), Aidoc (radiology workflow prioritization), PathAI (computational pathology, partnered with major pharmaceutical companies for clinical trial endpoint analysis), and Paige.AI (whose prostate cancer detection tool was the first AI product in anatomic pathology to receive FDA authorization).

For practitioners building in this space, MONAI, the PyTorch-based open-source framework for medical imaging AI developed through a collaboration between NVIDIA, King's College London, and major academic medical centers, is the standard development toolkit. TotalSegmentator, built on nnU-Net, has become a widely used pretrained model for anatomical segmentation. The emerging concept of medical foundation models (large pretrained models adaptable to specific clinical imaging tasks with limited fine-tuning data) is one of the most active research areas in the field, with groups at Stanford, Oxford, and Tübingen publishing significant work in 2023–24.

Predictive analytics and risk stratification

This is the unglamorous, high-impact corner of healthcare AI — the models that tell a care coordinator, on Monday morning, which twenty patients in a panel of two thousand need a phone call this week. Less dramatic than diagnostic AI. Arguably more consequential at a population level.

The technical approaches here are more classical than in imaging: XGBoost, LightGBM, Cox proportional hazards models and their machine learning extensions (DeepSurv, Random Survival Forests), and increasingly temporal transformer models for time-series EHR data. Epic Systems — whose EHR platform covers roughly a third of US hospital beds — has deployed sepsis, deterioration, and readmission prediction models at scale across its customer base. The Epic models are simultaneously the most widely deployed clinical AI in existence and among the most studied for failure modes and bias.

The 2021 STAT News investigation into Epic's sepsis model, which found significant performance variation across patient demographics and institutions, remains one of the most important documents in understanding the gap between validation performance and real-world deployment.

EHR data infrastructure

Most healthcare AI runs on top of messy, fragmented, inconsistently coded EHR data. The standard interoperability framework is HL7 FHIR (Fast Healthcare Interoperability Resources) — the API standard that governs how clinical data is exchanged between systems. The 21st Century Cures Act in the US has mandated FHIR-based data access, making it the unavoidable plumbing of modern health data infrastructure.
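To make the FHIR "plumbing" concrete, here is a minimal sketch of reading one lab result from a FHIR Observation resource. The JSON is hand-written in the shape of a FHIR R4 Observation with made-up values, and `extract_lab` is a hypothetical helper, not part of any FHIR library.

```python
import json

# Hand-written example in the shape of a FHIR R4 Observation; values are made up.
observation_json = """
{
  "resourceType": "Observation",
  "status": "final",
  "code": {
    "coding": [
      {"system": "http://loinc.org", "code": "2160-0",
       "display": "Creatinine [Mass/volume] in Serum or Plasma"}
    ]
  },
  "subject": {"reference": "Patient/example"},
  "effectiveDateTime": "2024-01-15T08:30:00Z",
  "valueQuantity": {"value": 1.4, "unit": "mg/dL",
                    "system": "http://unitsofmeasure.org", "code": "mg/dL"}
}
"""


def extract_lab(observation: dict, loinc_code: str):
    """Return (value, unit, timestamp) if the Observation carries the LOINC code, else None."""
    if observation.get("resourceType") != "Observation":
        return None
    codings = observation.get("code", {}).get("coding", [])
    matches = any(
        c.get("system") == "http://loinc.org" and c.get("code") == loinc_code
        for c in codings
    )
    quantity = observation.get("valueQuantity")
    if not matches or quantity is None:
        return None
    return quantity.get("value"), quantity.get("unit"), observation.get("effectiveDateTime")


result = extract_lab(json.loads(observation_json), "2160-0")
print(result)
```

Even this small example hints at where the mess comes from: the same lab can arrive under different codes, units, or value types depending on the source system, so the matching and unit handling is where most of the real work sits.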

For multi-site clinical research, OMOP CDM (the Observational Medical Outcomes Partnership Common Data Model, maintained by the Observational Health Data Sciences and Informatics community, OHDSI) is the standard for harmonizing EHR data across institutions. The OHDSI network, running on OMOP, now includes over 300 data partners across 34 countries, making it the largest federated clinical research network in existence.

Cloud infrastructure for healthcare AI is increasingly standardized around Google Cloud Healthcare API, Microsoft Azure Health Data Services, and AWS HealthLake — each providing FHIR-native data storage, de-identification pipelines, and ML integration. dbt for clinical data transformation, Apache Spark for large-scale EHR processing, and Databricks as an integrated platform are the data engineering stack appearing consistently in production healthcare AI environments.

Federated learning and privacy-preserving AI

One of the most important architectural developments in healthcare AI is the shift toward training models across multiple institutions without centralizing patient data. The motivation is both regulatory — HIPAA in the US, GDPR in Europe — and practical: no single institution has the diversity of patient data needed to train generalizable clinical models.

NVIDIA FLARE (Federated Learning Application Runtime Environment) is the most widely deployed framework for federated clinical AI, used in collaborations including a 2021 Nature Medicine study that trained a COVID outcome prediction model across 20 health systems on five continents. OpenFL (Intel) and PySyft (OpenMined) are the other significant open-source frameworks. The combination of federated learning with differential privacy (mathematical techniques for bounding how much any individual patient's data can influence a model) is an active research frontier with direct clinical relevance.
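The core aggregation step in federated learning is simple enough to sketch. The example below is a plain-Python illustration of federated averaging (FedAvg-style weighting by local sample count). It does not use or imitate the NVIDIA FLARE, OpenFL, or PySyft APIs, the parameter vectors and site sizes are hypothetical, and it omits the secure transport and differential privacy layers a real deployment would need.

```python
from typing import List, Sequence


def federated_average(site_weights: Sequence[Sequence[float]],
                      site_sizes: Sequence[int]) -> List[float]:
    """Mean of per-site parameter vectors, weighted by each site's sample count."""
    if not site_weights or len(site_weights) != len(site_sizes):
        raise ValueError("need one sample count per site")
    total = sum(site_sizes)
    dim = len(site_weights[0])
    averaged = [0.0] * dim
    for weights, n in zip(site_weights, site_sizes):
        if len(weights) != dim:
            raise ValueError("all sites must share the same parameter shape")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged


# Hypothetical parameter vectors from three hospitals after one local training round.
global_weights = federated_average(
    site_weights=[[0.2, -1.0], [0.4, -0.8], [0.1, -1.2]],
    site_sizes=[1000, 3000, 1000],
)
print(global_weights)
```

Only the parameter vectors leave each site; the patient records that produced them do not. That is the property that makes the approach attractive under HIPAA and GDPR, though model updates can still leak information, which is why the pairing with differential privacy matters.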

The Problem Nobody Wants to Name: Equity in Clinical AI

What the data actually shows

Performance degradation in underrepresented populations is not a theoretical concern in healthcare AI. It is a measured reality in deployed systems, documented in peer-reviewed research, and not yet adequately addressed in the regulatory frameworks that govern medical AI.

A 2019 study in Science by Obermeyer et al. examined a widely used commercial algorithm for identifying patients for high-risk care management programs. The algorithm used healthcare costs as a proxy for health needs. Because Black patients historically receive less care than white patients with equivalent health conditions — a result of access barriers and systemic bias — the algorithm systematically underestimated the health needs of Black patients, generating equivalent risk scores for Black patients who were actually sicker than their white counterparts. The algorithm was not designed to be discriminatory. It was designed to be accurate. The discrimination was embedded in what "accuracy" was defined against.

Subsequent work has documented analogous problems in dermatology AI (training data skewed toward lighter skin tones), in cardiac risk models (underrepresentation of women in training datasets), and in sepsis prediction (performance degradation in elderly and non-English-speaking patients). These are not edge cases. They are structural features of systems trained on data that reflects historical inequities in care access.

What responsible development actually looks like

The organizations doing this work well have made equity evaluation a non-negotiable stage in their model development pipelines — not an optional analysis run after deployment. They stratify performance metrics by race, ethnicity, age, sex, socioeconomic status, and primary language before a model is considered for clinical use. They treat performance disparities as deployment blockers. And they are actively working to source training data that represents the populations their systems will serve, not just the populations whose data is easiest to collect.
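Treating disparities as deployment blockers can be expressed as an explicit gate. The sketch below is one hedged illustration: the function names, the choice of sensitivity as the metric, and the gap threshold are all assumptions, and the rows are toy data rather than results from any study.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def sensitivity_by_group(rows: Iterable[Tuple[str, bool, bool]]) -> Dict[str, float]:
    """rows are (group, predicted_positive, actually_positive); returns sensitivity per group."""
    true_pos = defaultdict(int)
    actual_pos = defaultdict(int)
    for group, predicted, actual in rows:
        if actual:
            actual_pos[group] += 1
            if predicted:
                true_pos[group] += 1
    return {g: true_pos[g] / n for g, n in actual_pos.items()}


def disparity_blockers(per_group: Dict[str, float], max_gap: float) -> List[str]:
    """Groups whose sensitivity trails the best-performing group by more than max_gap."""
    best = max(per_group.values())
    return sorted(g for g, s in per_group.items() if best - s > max_gap)


# Toy data: 10 true cases per group; the model catches 9 in group A and 6 in group B.
rows = ([("A", True, True)] * 9 + [("A", False, True)] * 1
        + [("B", True, True)] * 6 + [("B", False, True)] * 4)
per_group = sensitivity_by_group(rows)
print(per_group, disparity_blockers(per_group, max_gap=0.05))
```

In practice the same check would run across every stratification axis listed above and across more than one metric, with enough cases per subgroup for the comparison to be meaningful.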

This is harder, slower, and more expensive. It is the only version of healthcare AI worth building.

How to Actually Build a Career in This Field

What the job market looks like right now

The healthcare AI job market in 2024–25 is in an interesting moment. General data science hiring has cooled significantly from the 2021–22 peak. Healthcare AI hiring has cooled less — and in specific areas has continued to grow. The FDA's expanded guidance on AI/ML in medical devices, the CMS reimbursement codes introduced in 2024 for AI-assisted imaging analysis, and the ongoing EHR documentation AI buildout are all creating sustained demand for people who can work at the technical-clinical intersection.

Roles to watch: Clinical AI Engineer, Health Informatics Data Scientist, ML Engineer – Medical Imaging, Clinical NLP Engineer, Real-World Evidence Analyst, and the emerging title of AI Clinical Integration Specialist — which is essentially the role of bridging the gap between validated model and functional clinical deployment. That last role barely existed three years ago. It is now one of the most actively recruited profiles in large health systems.

The technical foundation you need

The skills that actually matter in production healthcare AI differ from what most data science curricula emphasize.

Machine learning fundamentals remain essential, but the specific applications matter: survival analysis for clinical outcome modeling, calibration methods (Platt scaling, isotonic regression) for producing reliable probability estimates, and uncertainty quantification for clinical decision support. A model that says "70% probability of sepsis" needs to be right about 70% of the time across the patients it scores at that level, not just rank patients correctly. Most data scientists trained on standard ML curricula have not engaged seriously with calibration. In healthcare, it is not optional.
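Calibration is easy to check once predictions and outcomes are side by side. The sketch below builds a reliability table and an expected calibration error in plain Python. It measures calibration rather than correcting it (Platt scaling and isotonic regression are the correction step), and the example predictions are hypothetical.

```python
from typing import List, Sequence, Tuple


def reliability_table(probs: Sequence[float], outcomes: Sequence[int],
                      n_bins: int = 10) -> List[Tuple[float, float, int]]:
    """Per non-empty bin: (mean predicted probability, observed event rate, count)."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[idx].append((p, y))
    table = []
    for members in bins:
        if members:
            mean_p = sum(p for p, _ in members) / len(members)
            rate = sum(y for _, y in members) / len(members)
            table.append((mean_p, rate, len(members)))
    return table


def expected_calibration_error(probs: Sequence[float], outcomes: Sequence[int],
                               n_bins: int = 10) -> float:
    """Count-weighted average gap between predicted probability and observed rate."""
    table = reliability_table(probs, outcomes, n_bins)
    total = sum(count for _, _, count in table)
    return sum(abs(mean_p - rate) * count for mean_p, rate, count in table) / total


# Hypothetical: ten patients scored 0.7 of whom seven had the event (well calibrated),
# versus ten patients scored 0.9 of whom five had the event (overconfident).
calibrated = expected_calibration_error([0.7] * 10, [1] * 7 + [0] * 3)
overconfident = expected_calibration_error([0.9] * 10, [1] * 5 + [0] * 5)
print(f"{calibrated:.2f} vs {overconfident:.2f}")
```

Both hypothetical models could rank patients identically and post the same AUROC. Only the first one produces a number a clinician can act on at face value.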

Clinical NLP is one of the highest-leverage technical skills in the current market. The ability to work with unstructured clinical text — physician notes, discharge summaries, radiology reports — using both classical approaches (clinical NER, negation detection, UMLS concept extraction) and modern LLM fine-tuning is in acute demand.
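Negation detection shows why clinical text needs its own tooling: "denies chest pain" and "chest pain" must not produce the same feature. The sketch below is a deliberately simplified rule-based approach in the spirit of NegEx-style systems, not an implementation of any published algorithm. The trigger list, scope terminators, and token window are illustrative assumptions.

```python
import re
from typing import List, Tuple

# Small illustrative lists; real clinical lexicons are far larger.
NEGATION_TRIGGERS = ["no", "denies", "without", "negative for", "no evidence of"]
SCOPE_TERMINATORS = ["but", "however", "although"]


def find_negated(sentence: str, concepts: List[str], window: int = 5) -> List[Tuple[str, bool]]:
    """For each concept found, report whether a trigger precedes it within `window` tokens."""
    tokens = re.findall(r"[a-z]+", sentence.lower())
    results = []
    for concept in concepts:
        concept_tokens = concept.lower().split()
        n = len(concept_tokens)
        for i in range(len(tokens) - n + 1):
            if tokens[i:i + n] != concept_tokens:
                continue
            preceding = tokens[max(0, i - window):i]
            # Drop everything up to the last scope terminator ("but", "however", ...).
            for j in range(len(preceding) - 1, -1, -1):
                if preceding[j] in SCOPE_TERMINATORS:
                    preceding = preceding[j + 1:]
                    break
            context = " ".join(preceding)
            negated = any(
                re.search(rf"\b{re.escape(t)}\b", context) for t in NEGATION_TRIGGERS
            )
            results.append((concept, negated))
            break  # only the first mention of each concept is reported
    return results


note = "Patient denies chest pain but reports shortness of breath."
print(find_negated(note, ["chest pain", "shortness of breath"]))
```

Rules like these are brittle on real notes (abbreviations, templated text, copy-forward), which is part of why hybrid pipelines that combine classical extraction with fine-tuned language models are in demand.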

EHR data fluency is non-negotiable. Work with real, messy clinical data before claiming you can build models on it. The MIMIC-IV dataset — MIT's critical care database, freely available after completing a brief training course — is the standard entry point. Build something on it. Hit all the data quality problems. That experience is worth more than any certification.

The clinical context you cannot skip

The most common failure mode of technically skilled candidates entering healthcare is treating clinical data like any other tabular dataset. It is not. A creatinine value means something specific. A gap in medication records might mean the patient stopped taking the drug, or it might mean the pharmacist used a different NDC code. The difference between a meaningful feature and a source of data leakage is often clinical knowledge, not statistical intuition.

Invest in developing genuine clinical literacy. This does not mean becoming a clinician. It means understanding the clinical workflows your models will operate inside, the decisions they will inform, and the ways in which a confident wrong answer is different in medicine than it is in product recommendation. The AMIA 10x10 course (American Medical Informatics Association) is the most recognized credential for this kind of foundational clinical informatics knowledge.

The regulatory knowledge that separates serious practitioners

The FDA's Software as a Medical Device (SaMD) framework governs what can be deployed clinically in the US. The distinction between clinical decision support tools that are and are not subject to FDA oversight is genuinely complex and has significant implications for what can be built and deployed. The EU AI Act has placed high-risk classification on most clinical AI applications, with implications for transparency, auditability, and human oversight requirements that are still being worked out in practice.

Reading the FDA's 2023 draft guidance on AI/ML-based SaMD is not glamorous. It is the kind of thing that separates practitioners who can operate at the serious end of this field from those who cannot.

Communities and resources worth your time

Datasets to work with: MIMIC-IV (critical care), PhysioNet (physiological signals), NIH Chest X-ray dataset, TCGA (cancer genomics), UK Biobank (population health)

Competitions that build real skills: RSNA Radiology competitions on Kaggle, SIIM medical imaging challenges, PhysioNet Computing in Cardiology Challenge

Professional communities: AMIA (the professional home of clinical informatics), OHDSI (open-source health data analytics), the Stanford HAI healthcare working group, the Alan Turing Institute's health programme in the UK

Research groups to follow: DeepMind Health, Google Health AI, Stanford AIMI Center, MIT CSAIL Clinical ML Group, UK Biobank research network

Reading: The Lancet Digital Health and npj Digital Medicine are the two journals with the highest signal-to-noise ratio for practical healthcare AI research. NEJM Catalyst for the deployment and organizational side.

The Moment the Field Is Actually In

Healthcare AI is not early. The foundational research is done. The regulatory frameworks — imperfect as they are — exist. Early deployments have generated enough real-world evidence to understand, with reasonable clarity, what works and what does not.

We are at the moment where the primary question is not whether AI can contribute meaningfully to clinical outcomes. The evidence is unambiguous that it can. The question is whether the field has the organizational maturity, the ethical seriousness, and the right people to deploy this technology in ways that are equitable, safe, and genuinely centered on patients rather than on product metrics.

The constraint is not compute. It is not model architecture. It is not even data — there is more clinical data than any current model knows what to do with. The constraint is people who understand both the technical depth and the clinical context, who take the equity problem seriously rather than treating it as a footnote, who can communicate uncertainty to a surgeon and deployment risk to a hospital board, and who hold clearly the distinction between a model that performs on a benchmark and a system that helps a patient.

That combination is rare. The field needs it desperately. And the path to developing it is more accessible right now than it has ever been.

SB Analysis — This piece draws on SB's editorial research into healthcare AI deployment, including peer-reviewed literature from Nature Medicine, The Lancet Digital Health, NEJM Catalyst, and Science; FDA regulatory documentation; and practitioner interviews across US and European health systems conducted in 2023–24. These are our assessments, not original empirical claims.
